The AI Energy Surcharge: Trump’s New Policy To Make Tech Giants Pay More For Data Center Power

Alright, let's talk about something that’s quietly humming in the background of our digital lives: energy. And not just the kind that powers your morning coffee maker (though that's crucial, obviously). We're diving into the colossal energy demands of the tech world, specifically those massive data centers that keep our Netflix streaming, our social feeds scrolling, and all those AI dreams humming along. And guess what? There’s a new buzz in town, a potential policy shift that could have us all thinking about where our digital bread is buttered, and who’s picking up the tab.

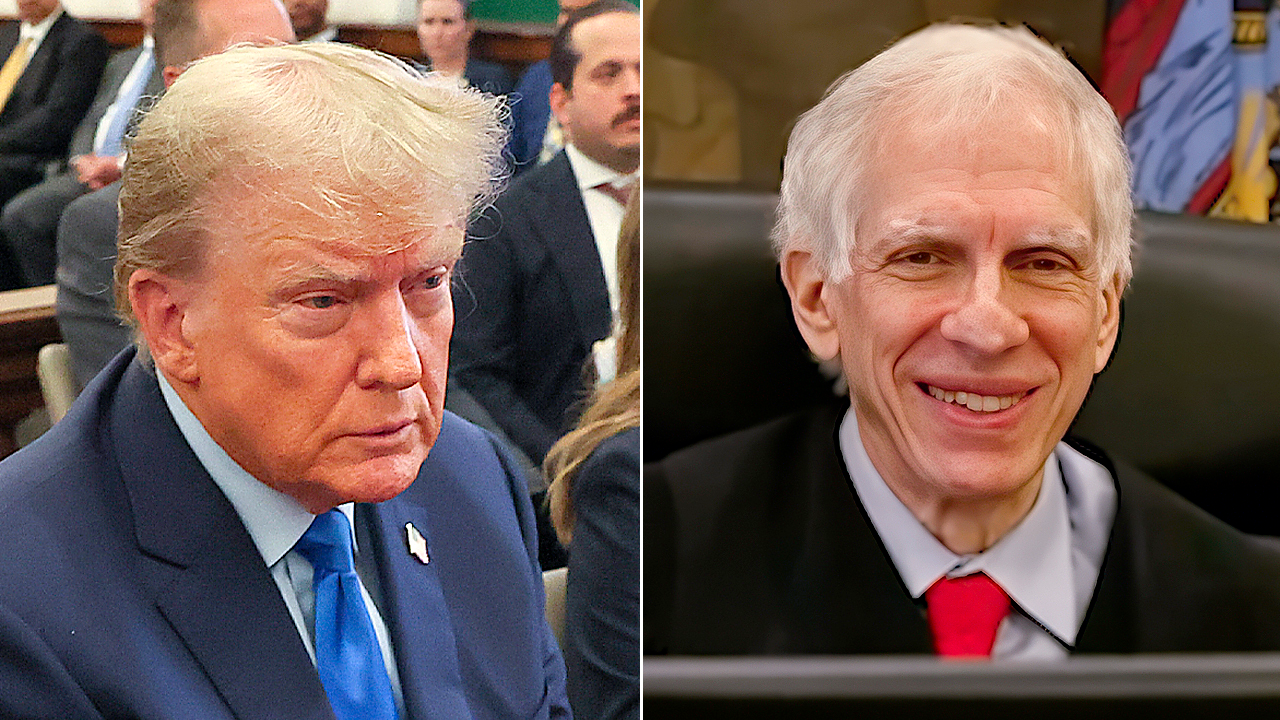

Picture this: Donald Trump, a man who’s never shy about making a statement, is reportedly mulling over a new policy. The gist? An AI Energy Surcharge. The idea is to make the titans of tech, the Googles, the Amazons, the Metas of the world, pay more for the electricity powering their ever-expanding data centers. Think of it as a little "thank you" tax for all that digital horsepower they’re consuming.

Why now, you ask? Well, artificial intelligence is no longer just a sci-fi concept. It’s here, it's learning, and it’s hungry. Training these sophisticated AI models requires an incredible amount of computing power, and that power, my friends, comes directly from the electrical grid. We’re talking about vast complexes, humming 24/7, gobbling up electricity like a kid at a candy store.


These data centers are the unsung heroes (or perhaps the silent behemoths) of our digital age. They’re the physical embodiment of the cloud. Remember when we used to worry about losing our photos if our hard drive crashed? Now, everything is "in the cloud," and those clouds are made of servers, cables, and a whole lot of electricity. And the AI revolution? It’s like strapping a rocket engine to that already massive energy appetite.

So, the thinking behind this proposed surcharge is pretty straightforward: these tech giants are reaping enormous benefits from the digital economy, an economy increasingly powered by AI. And a significant chunk of that operational cost, the sheer juice needed to keep it all running, is electricity. The idea is that they should contribute more to the energy infrastructure that supports them, especially as their demands skyrocket.

The Scale of the Energy Beast

Let's get a little nerdy for a second, because the numbers are genuinely mind-boggling. Some estimates suggest that data centers globally could consume a significant percentage of the world’s electricity in the coming years. We're talking about consumption that rivals that of entire countries! And AI? It's projected to supercharge that demand. Imagine the electricity needed to power every single smartphone in the world, then multiply that by… well, a lot. That’s the ballpark we’re playing in.

It’s not just about the servers themselves, either. These centers generate a ton of heat. Think of it like your laptop on overdrive. To keep those delicate machines from melting into puddles of silicon and regret, they need serious cooling systems. And those cooling systems? You guessed it: they also run on electricity. It’s a continuous cycle of power in, heat out, power to cool the heat. It’s a digital ouroboros of energy consumption.
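To put rough numbers on that loop, the industry uses a metric called Power Usage Effectiveness (PUE): total facility energy divided by the energy that actually reaches the computing gear. Here's a minimal Python sketch of the arithmetic. The figures (1,000 kWh of server load, a PUE of 1.5) are made-up illustrations, not measurements from any real facility, and the function names exist only for this example.

```python
def total_facility_kwh(it_kwh: float, pue: float) -> float:
    """Total energy drawn by the facility for a given IT load.

    PUE = total facility energy / IT equipment energy, so it can never be
    below 1.0 (that would mean the building uses less than its own servers).
    """
    if pue < 1.0:
        raise ValueError("PUE cannot be below 1.0")
    return it_kwh * pue


def overhead_kwh(it_kwh: float, pue: float) -> float:
    """Energy spent on everything except the IT gear itself.

    Cooling is usually a big part of this, but the overhead also covers
    things like power conversion losses and lighting.
    """
    return total_facility_kwh(it_kwh, pue) - it_kwh


if __name__ == "__main__":
    it_load = 1000.0      # hypothetical: kWh consumed by servers
    assumed_pue = 1.5     # hypothetical: illustrative PUE value
    print(f"Total facility energy: {total_facility_kwh(it_load, assumed_pue):.1f} kWh")
    print(f"Overhead (cooling etc.): {overhead_kwh(it_load, assumed_pue):.1f} kWh")
```

The closer a facility's PUE gets to 1.0, the less of its bill goes to keeping the machines from cooking themselves.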

The proponents of this surcharge argue that this massive energy draw puts a strain on existing grids, and in some cases, can even lead to increased reliance on fossil fuels if renewable sources aren’t scaling fast enough. So, the surcharge could serve a dual purpose: generating revenue and, perhaps, incentivizing these companies to invest even more heavily in sustainable energy solutions.

Think about it like this: when you use a lot of water, your water bill goes up. When you use a lot of electricity at home, your bill reflects that. This policy suggests that the tech giants, who are using orders of magnitude more electricity, should have a similar mechanism to acknowledge and contribute to that usage.
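This piece doesn't have the actual mechanics of the proposed surcharge, so treat the following as a purely hypothetical sketch of how a usage-based bill with an extra charge on heavy consumption could work. Every number and name here (the base rate, the threshold, the surcharge rate, the function itself) is an assumption invented for illustration.

```python
def bill_with_surcharge(
    kwh_used: float,
    base_rate: float,
    threshold_kwh: float,
    surcharge_rate: float,
) -> float:
    """Return the bill: a flat per-kWh charge plus a surcharge on usage
    above the threshold.

    All parameters are hypothetical; nothing here reflects a real tariff
    or the actual design of the proposed policy.
    """
    if kwh_used < 0:
        raise ValueError("kwh_used must be non-negative")
    base_charge = kwh_used * base_rate
    # Only the portion of usage above the threshold is surcharged.
    excess_kwh = max(0.0, kwh_used - threshold_kwh)
    return base_charge + excess_kwh * surcharge_rate


if __name__ == "__main__":
    # Hypothetical: $0.10 per kWh, surcharge of $0.02 per kWh above 1,000 kWh.
    for usage in (500.0, 2000.0):
        total = bill_with_surcharge(usage, 0.10, 1000.0, 0.02)
        print(f"{usage:.0f} kWh -> ${total:.2f}")
```

A household-sized user below the threshold pays the ordinary rate, while a heavy user pays extra only on the portion above it. Whether a real surcharge would be tiered like this, flat, or something else entirely is an open question.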

The Tech Giants' Perspective (and the Counterarguments)

Now, naturally, when you talk about making big companies pay more, there’s always a bit of a kerfuffle. The tech industry, for all its innovative prowess, is also a powerful lobbying force. They're likely to point out several things. For starters, they might argue that they are already making significant investments in renewable energy. Many of the major players have pledged to be carbon-neutral or carbon-negative, and they're pouring billions into solar farms, wind turbines, and other green initiatives to power their operations.

They could also argue that this surcharge could stifle innovation. If their operational costs skyrocket, it might mean less money for research and development, less funding for the next big AI breakthrough, or even higher prices for their services passed on to consumers. After all, who do you think ultimately pays for everything? Yep, us. The end-users.

Another point they might raise is the complexity of defining "AI energy consumption." How do you accurately measure the electricity used specifically for AI workloads versus, say, the energy used for email servers or website hosting? It's a technical challenge, to say the least. Imagine trying to track down every watt used by your Netflix binge versus your casual browsing. It’s a similar, albeit much larger, conundrum.
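To see why this gets messy, here's a deliberately crude Python sketch of one way someone could try: take a facility's metered total and split it across workloads in proportion to the compute hours each one logged. This is an assumed approach for illustration, not how any utility or regulator actually does it, and the workload names and numbers are invented.

```python
def apportion_energy(total_kwh: float, compute_hours: dict[str, float]) -> dict[str, float]:
    """Split a metered energy total across workloads by share of compute hours.

    This is a naive proxy: it assumes every compute hour draws the same
    power, which is not true when AI jobs run on power-hungry accelerators
    and email runs on ordinary servers.
    """
    total_hours = sum(compute_hours.values())
    if total_hours <= 0:
        raise ValueError("compute_hours must sum to a positive number")
    return {
        workload: total_kwh * hours / total_hours
        for workload, hours in compute_hours.items()
    }


if __name__ == "__main__":
    # Hypothetical workloads and hours, invented for this example.
    hours = {"ai_training": 600.0, "email": 100.0, "web_hosting": 300.0}
    for workload, kwh in apportion_energy(1000.0, hours).items():
        print(f"{workload}: {kwh:.1f} kWh")
```

The weak spot is the assumption baked into the docstring. As soon as different workloads run on different hardware, hours stop being a fair stand-in for watts, and that's exactly the conundrum the industry would point to.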

Furthermore, some critics might argue that such a surcharge could disproportionately affect smaller tech companies or startups that are also relying on cloud infrastructure. If the cost of powering that infrastructure goes up, it could be a bigger hurdle for them to overcome compared to the established giants with deeper pockets.

Cultural Echoes and Fun Facts

This whole discussion isn't entirely new. Throughout history, there have been debates about taxing industries that have a significant societal impact, whether it's tobacco, alcohol, or, more recently, carbon emissions. It’s the age-old question of who should bear the cost of progress and its byproducts. Think of the historical debates around industrialization and the need for regulations to address pollution. The AI energy surcharge feels like a modern-day echo of those ongoing conversations about corporate responsibility and environmental stewardship.

Here’s a fun fact for you: one of the world’s first electronic digital computers was built by John Vincent Atanasoff and Clifford Berry in the late 1930s and early 1940s. It was called the Atanasoff-Berry Computer (ABC), and while it wasn't a data center and certainly wasn't powering AI, it was a pioneering step towards the massive computing power we have today. Imagine them trying to explain their energy needs to the local power company back then! I bet it would have been a lot less complicated than what we’re talking about now.

And speaking of power, did you know that some data centers are located in surprisingly cool places? To help with natural cooling, some are built in cold northern climates near the Arctic, and experimental ones have even been submerged in the ocean. Talk about thinking outside the box! Imagine the tech support calls for a data center at the bottom of the ocean. "Uh, yes, I think we have a slight humidity issue."

The idea of a surcharge also brings to mind the concept of "externalities" in economics. These are costs that are not directly paid by the producer or consumer but are borne by society as a whole. For example, the pollution from a factory is an externality that affects the environment and public health. In this case, the argument is that the massive energy consumption of data centers, and its potential impact on the grid and climate, could be considered an externality that the tech giants should help to mitigate.

Practical Tips for Navigating the Digital Energy Landscape

So, while this policy is still in the realm of discussion and debate, it does give us, as consumers and users of technology, something to ponder. How can we be more mindful of our own digital energy footprint? It might sound like a drop in the ocean compared to a data center, but collective action always matters.

Unplug when you can: That phone charger, that smart speaker, those little power bricks that are always plugged in, even when not in use, still draw a small amount of power. It’s called "vampire drain" or "phantom load." Think of it as your electronics having a midnight snack. So, a simple habit of unplugging unused chargers can make a difference over time.

Optimize your devices: Lowering screen brightness, enabling power-saving modes, and closing unnecessary apps can all help reduce the energy your personal devices consume. It’s like giving your phone a little nap when it doesn’t need to be working overtime.

Stream wisely: While we all love binge-watching, consider downloading content you plan to watch more than once. A downloaded file crosses the network a single time, while every re-stream sends the same data all over again. And maybe, just maybe, choose standard definition instead of 4K for content that doesn't absolutely require it. Your eyes might not notice the difference, but the energy grid might!

Choose greener tech providers: When you have the option, look for internet service providers, cloud hosting services, or even streaming platforms that are transparent about their energy usage and their commitment to renewable energy. Some companies are making genuine efforts to power their operations sustainably. A quick search can often reveal which ones are leading the pack.

Support policies that promote energy efficiency: This might be a bit more involved, but staying informed about energy policies, both at the local and national level, and supporting initiatives that encourage energy efficiency and renewable energy adoption can have a broader impact. It’s about more than just our personal habits; it’s about shaping the future of energy for everyone.
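If you're curious what the "vampire drain" from the first tip adds up to, the arithmetic is simple enough to sketch in a few lines of Python. The 0.5-watt standby figure below is an assumed example value, not a measurement; real standby draw varies a lot from device to device.

```python
HOURS_PER_YEAR = 24 * 365  # ignoring leap years for simplicity


def annual_standby_kwh(standby_watts: float, device_count: int = 1) -> float:
    """Energy used in a year by devices left plugged in around the clock.

    watts * hours gives watt-hours; dividing by 1000 converts to kWh.
    """
    if standby_watts < 0 or device_count < 0:
        raise ValueError("inputs must be non-negative")
    return standby_watts * device_count * HOURS_PER_YEAR / 1000.0


if __name__ == "__main__":
    # Hypothetical: five chargers each drawing 0.5 W while idle.
    print(f"{annual_standby_kwh(0.5, device_count=5):.2f} kWh per year")
```

It's a small number for one household, which is the point of the tip: the habit costs nothing, and the savings only become visible when multiplied across many homes.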

A Short Reflection

It's fascinating, isn't it? We live in a world where our digital lives have such tangible, physical consequences. The "cloud" isn't some ethereal realm; it's a vast network of servers powered by literal watts and kilowatts. And as AI continues to weave itself into the fabric of our existence, its energy demands will only grow. This proposed AI Energy Surcharge, whether it comes to fruition in its current form or not, serves as a potent reminder. It’s a prompt to consider the invisible infrastructure that underpins our modern convenience, and to think about who should be responsible for its upkeep and its impact. It’s a conversation that touches upon innovation, environmental responsibility, and the very real cost of powering our ever-evolving digital future. And in the grand scheme of things, it’s a conversation that affects us all, from the creators of the algorithms to the users scrolling through their feeds, all connected by the invisible, yet powerful, hum of electricity.