Diujap Statistics 5th Edition Answers 31

Hey there, stats whiz! Or maybe you're more of a "stats novice who accidentally stumbled here" kind of person. Either way, welcome! Today, we're diving headfirst into the exciting, the exhilarating, the (sometimes) slightly perplexing world of Diujap Statistics, 5th Edition. Specifically, we're going to be chatting about those ever-elusive answers to Chapter 31. Don't worry, we're not going to be pulling an all-nighter with a calculator the size of a minivan. This is going to be a chill, conversational romp through some of those trickier problems.

So, grab your favorite beverage – coffee, tea, maybe even a celebratory juice box because you made it to Chapter 31 – and let's get started. Think of me as your friendly study buddy, the one who actually skimmed the textbook and remembers where those pesky formulas live. We'll break down some of those brain-benders, sprinkle in a few jokes (because what’s statistics without a little existential dread masked by humor, right?), and hopefully leave you feeling a whole lot more confident. Or at least, less likely to throw your textbook out the window. You're welcome.

The Dreaded Chapter 31: What's the Big Deal?

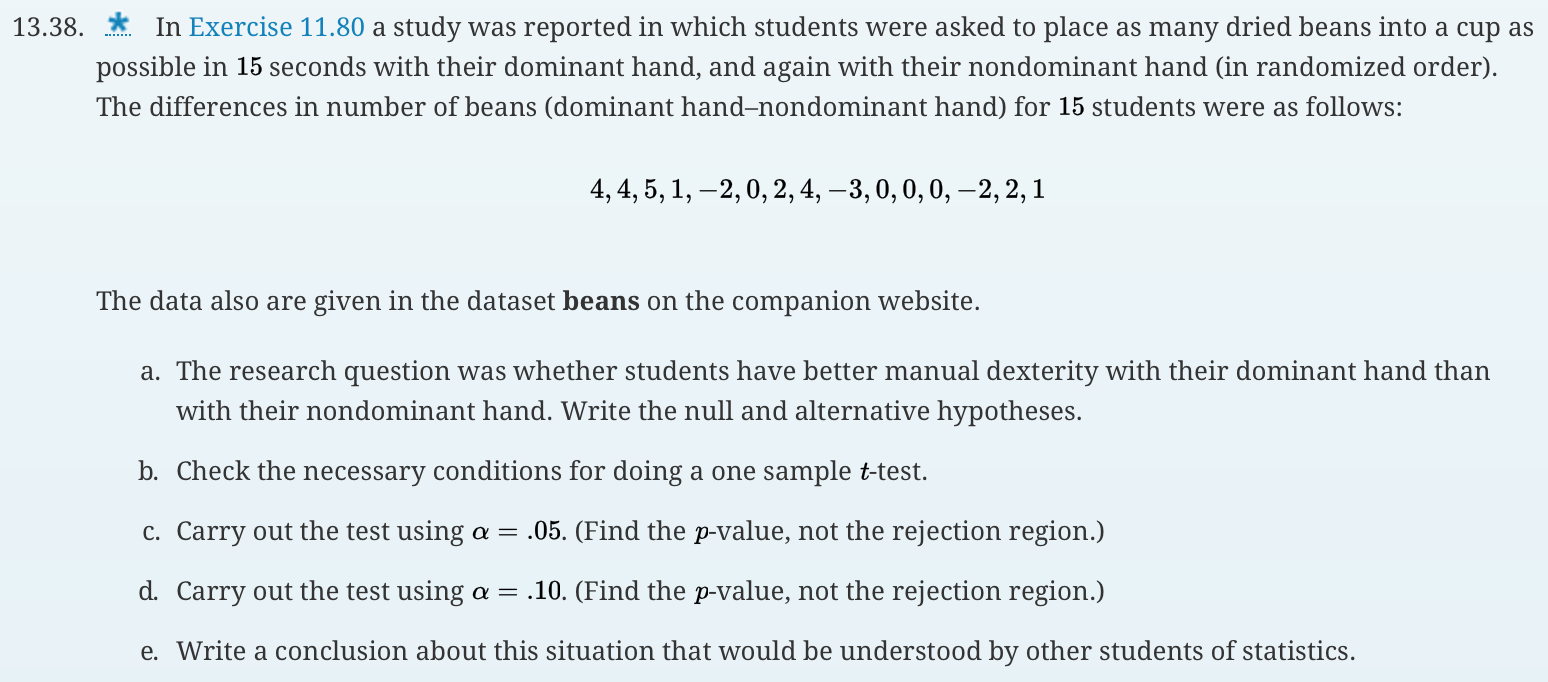

Alright, let's be honest. By the time you hit Chapter 31, you're probably feeling a mix of triumphant "I'm almost done!" and sheer "What fresh statistical hell is this?" It's that point where concepts start to get a little more abstract, a little more... intertwined. You've probably mastered p-values, wrestled with confidence intervals, and maybe even had a staring contest with a regression line or two. But Chapter 31? It often throws in some curveballs, maybe focusing on more advanced topics like hypothesis testing for proportions, ANOVA (Analysis of Variance), or Chi-Square tests. These are the big leagues, folks!

The beauty (and sometimes the beast) of statistics is how everything builds. What you learned in Chapter 2 is probably subtly lurking in Chapter 31, disguised in a fancy new notation. So, if you’re feeling a little fuzzy on some earlier concepts, don’t beat yourself up. A quick peek back at those chapters might be just the ticket to unlock the mysteries of 31. Think of it as a superhero origin story – the earlier chapters are the origin, and Chapter 31 is the supervillain showdown!

Let's Tackle Some Common Chapter 31 Conundrums

Now, without getting too specific because, you know, copyright and all that jazz (plus, where's the fun in just handing over answers without a little explanation?), let’s talk about the types of problems you might be seeing. Imagine you’ve got a problem that’s all about comparing the proportions of two different groups. For instance, maybe you’re curious if a new marketing campaign led to a significantly higher proportion of customers clicking on an ad compared to the old campaign. This is where you’d likely be whipping out your two-proportion z-test.

The key here is to identify your two proportions, your sample sizes, and what you're trying to prove (your alternative hypothesis). Are you just looking for any difference, or are you specifically hoping one proportion is greater than the other? Getting these details right is like choosing the right tool for the job. You wouldn't use a hammer to screw in a screw, and you wouldn't use a one-proportion z-test for a two-proportion problem. See? It's all about precision!
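Here's a minimal sketch of how that two-proportion z-test could look in plain Python, assuming the usual pooled standard error under H0. The function name `two_proportion_z_test` and the click counts are made up for illustration, not taken from the textbook.

```python
from math import sqrt
from statistics import NormalDist


def two_proportion_z_test(x1, n1, x2, n2, alternative="two-sided"):
    """Pooled two-proportion z-test; returns (z, p_value).

    x1, x2 are success counts and n1, n2 are sample sizes.
    alternative is "two-sided", "greater" (p1 > p2), or "less" (p1 < p2).
    """
    p1, p2 = x1 / n1, x2 / n2
    # Under H0 the two proportions are equal, so pool the samples.
    pooled = (x1 + x2) / (n1 + n2)
    se = sqrt(pooled * (1 - pooled) * (1 / n1 + 1 / n2))
    z = (p1 - p2) / se

    std_normal = NormalDist()
    if alternative == "greater":
        p_value = 1 - std_normal.cdf(z)
    elif alternative == "less":
        p_value = std_normal.cdf(z)
    elif alternative == "two-sided":
        p_value = 2 * (1 - std_normal.cdf(abs(z)))
    else:
        raise ValueError("alternative must be 'two-sided', 'greater', or 'less'")
    return z, p_value


# Hypothetical data: 120 of 1000 clicked under the new campaign, 90 of 1000 under the old.
z, p = two_proportion_z_test(120, 1000, 90, 1000, alternative="greater")
print(f"z = {z:.3f}, p-value = {p:.4f}")
```

Notice that the `alternative` argument is exactly the "any difference vs. one is greater" decision from the paragraph above. Pick it before you look at the data, not after.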

And then there's the glorious world of ANOVA. ANOVA is your best friend when you want to compare the means of three or more groups. Think about testing different teaching methods on student test scores. Instead of doing a bunch of pairwise comparisons (which gets messy and increases your chance of a Type I error – the dreaded false positive!), ANOVA lets you look at the overall variation. Is there any significant difference among the group means, or is what you're seeing just due to random chance? It’s like a group hug for means, but a statistical one.

Remember those F-statistics and p-values you’ll be calculating? They’re telling you the story. A small p-value (smaller than your significance level) means you reject that boring old null hypothesis (which usually says "all the means are equal") and conclude that at least one group mean differs from the others. It doesn't tell you which one, though; that's a job for follow-up comparisons. High five to that!
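To make the F-statistic a bit less mysterious, here is a rough sketch that computes it by hand for a one-way ANOVA. The test scores are invented, and the helper name `one_way_anova_f` is just a label for this example. One loud assumption: the p-value shortcut at the end only works when there are exactly three groups (numerator degrees of freedom = 2), where the F distribution's upper tail has a simple closed form. For any other number of groups you'd reach for a table or statistical software.

```python
from statistics import mean


def one_way_anova_f(groups):
    """Return (F, df_between, df_within) for a list of groups of numbers."""
    all_values = [v for g in groups for v in g]
    grand_mean = mean(all_values)
    k, n = len(groups), len(all_values)

    # Variation of the group means around the grand mean.
    ss_between = sum(len(g) * (mean(g) - grand_mean) ** 2 for g in groups)
    # Variation of individual scores around their own group mean.
    ss_within = sum((v - mean(g)) ** 2 for g in groups for v in g)

    df_between, df_within = k - 1, n - k
    f_stat = (ss_between / df_between) / (ss_within / df_within)
    return f_stat, df_between, df_within


# Hypothetical test scores under three teaching methods.
method_a = [78, 82, 85, 80, 75]
method_b = [88, 90, 85, 92, 85]
method_c = [70, 75, 72, 68, 75]

f_stat, df_between, df_within = one_way_anova_f([method_a, method_b, method_c])

# Closed-form upper tail of the F distribution, valid ONLY when df_between == 2.
assert df_between == 2
p_value = (1 + 2 * f_stat / df_within) ** (-df_within / 2)

print(f"F({df_between}, {df_within}) = {f_stat:.2f}, p-value = {p_value:.6f}")
```

A big F means the group means are spread out much more than the scores within each group would suggest, which is exactly the "is this more than random chance?" question.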

Now, who can forget the ubiquitous Chi-Square tests? These guys are fantastic for analyzing categorical data. You might be looking at whether there's an association between two categorical variables. For example, is there a relationship between someone's favorite ice cream flavor and their preferred movie genre? Or, in a more scientific context, is there an association between a certain treatment and a positive outcome in a clinical trial? The Chi-Square test of independence is your go-to for this.
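As a small sketch of the test of independence, the snippet below builds the expected counts from the row and column totals and adds up the (observed - expected)² / expected terms. The clinical-trial counts are made up, and `chi_square_independence` is a hypothetical helper name. The p-value line assumes a 2x2 table (one degree of freedom) with no continuity correction; bigger tables need a Chi-Square table or software.

```python
from math import erfc, sqrt


def chi_square_independence(table):
    """Return (chi2, df, expected) for a contingency table given as a list of rows."""
    row_totals = [sum(row) for row in table]
    col_totals = [sum(col) for col in zip(*table)]
    grand_total = sum(row_totals)

    # Expected count if the variables were independent: row total * column total / grand total.
    expected = [[r * c / grand_total for c in col_totals] for r in row_totals]

    chi2 = sum(
        (obs - exp) ** 2 / exp
        for obs_row, exp_row in zip(table, expected)
        for obs, exp in zip(obs_row, exp_row)
    )
    df = (len(table) - 1) * (len(col_totals) - 1)
    return chi2, df, expected


# Hypothetical trial: rows are treatment/control, columns are positive/negative outcome.
observed = [[30, 20],
            [18, 32]]
chi2, df, expected = chi_square_independence(observed)

# Upper-tail probability of a Chi-Square variable; this formula holds ONLY for df == 1.
assert df == 1
p_value = erfc(sqrt(chi2 / 2))

print(f"chi-square = {chi2:.3f}, df = {df}, p-value = {p_value:.4f}")
```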

And don't mix it up with the Chi-Square goodness-of-fit test! That one is for checking if your observed distribution of a single categorical variable matches an expected distribution. Imagine you expect a perfectly fair die to land on each number 1/6 of the time. The goodness-of-fit test helps you see if your actual die rolls are close enough to that expectation.
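The die example can be sketched in a few lines. The roll counts below are invented, and the critical value 11.07 is the standard table value for a Chi-Square distribution with 5 degrees of freedom at the 0.05 significance level; the choice of alpha = 0.05 is an assumption for this illustration.

```python
# Hypothetical counts for faces 1 through 6 after 60 rolls.
observed = [8, 12, 9, 11, 6, 14]
total_rolls = sum(observed)

# A fair die should land on each face 1/6 of the time.
expected = [total_rolls / 6] * 6

chi2 = sum((obs - exp) ** 2 / exp for obs, exp in zip(observed, expected))
df = len(observed) - 1

# Table value for df = 5 at alpha = 0.05 (assumed significance level).
CRITICAL_VALUE_DF5_ALPHA_05 = 11.07
reject_fair_die = chi2 > CRITICAL_VALUE_DF5_ALPHA_05

print(f"chi-square = {chi2:.2f}, df = {df}, reject fairness: {reject_fair_die}")
```

Here the statistic lands well under the critical value, so these rolls don't give you grounds to accuse the die of anything.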

Decoding the "Answers 31" Vibe

So, when you’re staring at the answers for Chapter 31, what should you be looking for? It's not just about matching numbers. It's about understanding the process. For a hypothesis test, you should see:

- A clear statement of the null hypothesis (H0) and the alternative hypothesis (Ha). These are the foundation of your test. Think of H0 as the default assumption (like "nothing interesting is happening") and Ha as what you're trying to find evidence for ("something interesting is happening!").

- The test statistic that was calculated (like a z-score, t-score, F-statistic, or Chi-Square statistic). This number summarizes your sample data in relation to the hypotheses.

- The p-value. This is the probability of observing your data (or more extreme data) if the null hypothesis were actually true. Small p-value = good evidence against H0.

- A conclusion. This is where you decide whether to reject or fail to reject the null hypothesis, based on your chosen significance level (often denoted by alpha, like 0.05). It should also be stated in the context of the problem. "We reject H0 because the p-value is less than 0.05, suggesting there is a statistically significant difference in X between groups Y and Z." (Or something to that effect!).

If you're dealing with a confidence interval, the answer will typically be a range of values. This range holds the plausible values for the true population parameter, given your sample. For example, a 95% confidence interval for a population proportion might be (0.55, 0.65). This means we're 95% confident that the true population proportion lies between 55% and 65%, where "95% confident" describes the method: intervals built this way capture the true value about 95% of the time across repeated samples. It’s like saying, "We're pretty darn sure the real deal is in this ballpark!"
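To see where a range like (0.55, 0.65) could come from, here is a minimal sketch of the simple large-sample interval, sample proportion plus or minus z* times the standard error. The sample (222 successes out of 370) is invented so the result lands near that example, and your textbook may use a different interval formula.

```python
from math import sqrt
from statistics import NormalDist


def proportion_confidence_interval(successes, n, confidence=0.95):
    """Large-sample (Wald) confidence interval for a population proportion."""
    p_hat = successes / n
    # z* cuts off (1 - confidence) / 2 in each tail of the standard normal curve.
    z_star = NormalDist().inv_cdf(1 - (1 - confidence) / 2)
    margin = z_star * sqrt(p_hat * (1 - p_hat) / n)
    return p_hat - margin, p_hat + margin


# Hypothetical sample: 222 successes out of 370.
low, high = proportion_confidence_interval(222, 370)
print(f"95% CI: ({low:.2f}, {high:.2f})")
```

This formula leans on a large sample with plenty of successes and failures, so it's worth checking those conditions before trusting the interval.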

Common Pitfalls and How to Avoid Them (Hopefully!)

One of the biggest traps in Chapter 31 (and honestly, all of stats) is misinterpreting the p-value. Remember, a p-value is not the probability that the null hypothesis is true. It's the probability of seeing data at least as extreme as yours, given that the null hypothesis is true. It’s a subtle but crucial difference. Think of it this way: if you’re baking cookies and they turn out a little lopsided, the p-value tells you how likely it is that you’d get cookies at least that lopsided if your oven was perfectly calibrated. It doesn't tell you the probability that your oven is actually out of calibration.
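If the "given that the null hypothesis is true" part feels slippery, a tiny worked example might help. Suppose (hypothetically) you flip a coin 100 times and get 60 heads, and H0 says the coin is fair. The sketch below adds up the exact binomial probabilities of 60 or more heads under that assumption, which is a one-sided p-value.

```python
from math import comb


def binomial_upper_tail(k, n, p=0.5):
    """P(X >= k) when X counts successes in n independent trials with success chance p."""
    return sum(comb(n, i) * p**i * (1 - p) ** (n - i) for i in range(k, n + 1))


# Hypothetical result: 60 heads in 100 flips, with H0 "the coin is fair" (p = 0.5).
p_value = binomial_upper_tail(60, 100)
print(f"P(60 or more heads | fair coin) = {p_value:.4f}")
```

Every probability in that sum is computed as if the coin were fair. Nothing in the calculation says how likely it is that the coin actually is fair, which is why the p-value can't answer that question.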

Another common mistake? Confusing correlation with causation. Just because two things are related (statistically speaking!) doesn't mean one causes the other. There could be a lurking variable, or it could be pure coincidence. So, if your Chapter 31 problem shows a strong association between, say, ice cream sales and shark attacks, don't jump to the conclusion that eating ice cream makes sharks attack you. (Although, wouldn't that be a fun, albeit terrifying, study to design!). The summer heat is probably the real culprit for both!

Also, keep an eye on your assumptions. Most statistical tests have underlying assumptions that need to be met for the results to be valid. For example, many tests assume your data is normally distributed or that your samples are independent. If those assumptions are violated, your fancy calculated p-value might be as reliable as a chocolate teapot. So, if the problem mentions checking assumptions, pay attention!

Putting it All Together: The Joy of Understanding

Look, I know sometimes looking at statistics problems can feel like trying to decipher an ancient alien language. The symbols, the formulas, the jargon – it can be a lot. But here’s the secret sauce: understanding the why behind the math is what truly makes it click. When you get an answer to a Chapter 31 problem, don't just check if your number matches. Ask yourself:

- What question was this problem trying to answer?

- What statistical tool was the best fit for this question?

- What does the result mean in the real world?

When you can answer those questions, you’ve gone beyond just memorizing formulas. You've started to develop that incredible statistical intuition. You're not just plugging numbers into a machine; you're using powerful tools to uncover insights and make informed decisions. That’s a superpower, my friends!

So, as you navigate the rest of Chapter 31 and whatever statistical adventures lie ahead, remember this: every problem you solve, every concept you grasp, is another step towards mastering this incredible field. You're building a toolkit that will help you understand the world around you in a deeper, more meaningful way. And that, my friends, is something to be incredibly proud of. You've got this! Go forth and conquer those statistics!